
Should ticket deflection really be a relevant success metric in customer education? It’s tempting to say “yes” because fewer questions indicate customers are better educated. And of course, leadership likes seeing numbers as proof that the program’s paying off.

However, Kristine Kukich, a seasoned customer education expert, has a different, more nuanced way of reading the situation. Success isn’t reflected in fewer tickets, per se. It’s about getting better tickets.

When she’s not working on massive watercolor paintings and new crochet projects, Kristine helps SaaS teams connect the dots between what customers do in-product and what they ask across support, CS, and community.

She also contributes to the broader CE community through the Customer Education Management Association (CEdMA) and runs The Training Sherpa and Mixology, where she shares practical strategies (including how to use AI without turning CE into a content factory).

I talked with Kristine about her typical day, how she quickly diagnoses a CE program, where AI tends to help, and how to build learning programs that are genuinely useful.

This article is part of the “A day in the life of a CE expert” interview series. We launched the series because customer education folks are constantly solving the same problems in parallel. These interviews are a simple way to trade notes and spark conversations in the field.

Turning complex tickets into a roadmap

When training is working, customers will actually start asking more complex questions. Instead of simple how-to inquiries, they’ll ask things like: “We’re trying to set up this workflow for our team. Can you help us do it the right way?”

It’s a clear sign they’re moving beyond basic product usage and that they trust you enough to bring bigger goals to the table, Kristine shared:

“That’s when customer support transforms into customer success, when you stop troubleshooting and get more room to be proactive.”

These interactions can inform more advanced customer education programs and help you create a feedback loop. Do it right and, gradually, your customers become your product experts. Now, that’s exciting.

Kristine and I started talking about prioritizing educational content and deciding what to build next. While it’s true that every customer question is a data point, the ones that repeat shape a roadmap.

“When people ask the same question over and over again, it’s a red flag,” Kristine says. “Either we don’t have the right content, or the content exists and no one knows about it. AI often helps us detect those patterns, and then it’s on us to revise, retag, or resurface what’s missing.”

So yes, track ticket deflection if leadership insists. But the real value is in the patterns. Keep tabs on what keeps coming up, where customers get stuck, and what they’re trying to achieve when they reach out.

Once you treat those questions like product feedback for your learning program, the next step becomes more obvious. And you should give yourself a pat on the back if they’re getting more difficult to address in a chat window.

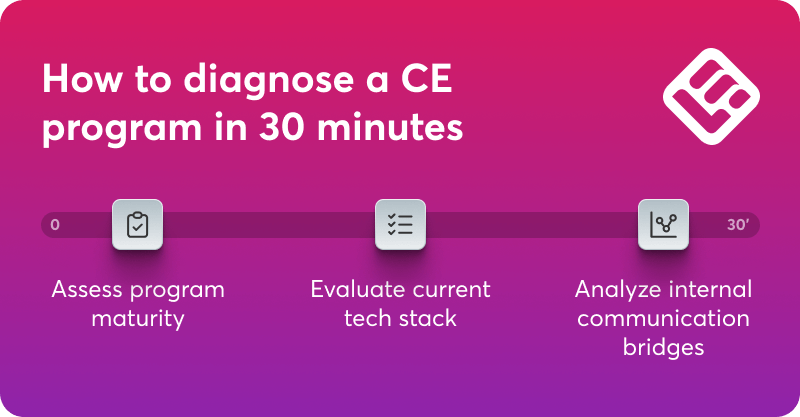

How to diagnose a customer education program in 30 minutes

When Kristine Kukich drops into a customer education program, she’s listening for signals that tell her what’s realistic to improve right now, and what will keep breaking until the foundations are fixed.

She starts by assessing maturity. There is no standardized scorecard for this task. It’s more like a quick read of the program’s current operating rhythm:

“It’s not a standard checklist. I’m looking at things like: what levels of content exist, how it’s distributed, and how often you’re realistically able to update it.”

Maturity matters because it sets the constraints. In Kristine’s words, it “dictates your options,” and once you see the maturity level clearly, you can “predict what will work better at different stages.”

Next, she checks the internal communication bridges between customer education and the rest of the organization. Programs that scale don’t operate in isolation. “The programs that really succeed build communication bridges across the organization,” she said, including across post-sales and “up and down the org chart.”

This is often where momentum gets created, because customer friction shows up in different places. Support sees one picture, CS sees another, and product usage data shows yet a third, and none of them realize they’re frames from the same movie.

Then comes technology. Kristine looks at what tools teams are using and what they’ve trained themselves to do inside those tools: “I look at the tools you’re using and the way you’re using them.”

She’s seen teams stretch tools far beyond what they were built for. People default to the tools they know and then build important workflows inside them, even when those tools weren’t designed for that work. It comes down to habit and sticking with what feels familiar.

Kristine shared a story that’s become her go-to example for what happens when tools start calling the shots. She once met a person who had actually built their resume in Excel.

For someone like me, who lives in Canva and thinks visually, that’s almost impossible to imagine. But to the person who did it, it probably made perfect sense. They knew Excel inside and out, and they could shape it into what they needed.

Still, sometimes it pays off to step outside your comfort zone and accept the learning curve that comes with mastering a new, purpose-built tool. It’s a long-term investment, really.

Why customer education and marketing go hand in hand

It’s been years since Kristine started having regular weekly chats with people across customer education, customer marketing, and post-sales (CS, support, leaders, individual contributors):

“It’s now three or four conversations per week, on average. In the past, those calls always ended the same way: ‘We should have recorded that,’” said Kristine.

Back then, she said, you didn’t have an AI companion running in the background and capturing the good bits, so a lot of practical insight just disappeared. What a shame!

“At one point, I thought to myself: Well, why don’t we record it, really?”

She brought the idea to Shannon Howard, and before long they were recording vidcasts and sharing notes in public.

The name Mixology is her shorthand for the bigger point. Customer education and customer marketing mix together as scale engines for customer success, especially once a company grows and post-sales expands.

Growth increases demand, and humans don’t magically multiply. Kristine asked what happens when teams get stuck being reactive (for example, training people one-by-one, creating endless videos, answering the same how-to questions) while the org is trying to scale:

“Education and marketing work well together here because they both move customers forward at scale. Education builds capability, and customer marketing creates momentum that keeps that capability in motion.”

When those two run together, post-sales gets breathing room. Kristine frames it as creating the foundation that lets CS and support focus more on the trust side “as we grow in our relationship with our customers.”

And this ties straight back to her “better tickets” idea. Customers come to you with richer context and bigger goals, which is exactly what you want to happen.

How AI connects learner intent across systems

If you work in customer education, you’re already sitting on a goldmine of learner intent. It’s just scattered. Customer success logs calls, support handles specific problems, community threads capture the real-world workarounds, and product analytics shows where users hesitate.

The hard part is turning all of that into one picture you can act on, without needing a dedicated analyst.

That’s the shift Kristine gets excited about:

“I can now leverage content from support and from customer success in ways that I would have never been able to do without a business analyst in years past. Thanks to AI, I get a gap analysis in my content versus the conversations that they’re having.”

Kristine is refreshingly grounded about what AI is good for here. Yes, it helps teams move faster, but she’s especially interested in how it supports quality and diagnosis:

“Most of the progress we’re seeing is in time to production, but leveraged as a QA tool, AI can analyze gaps in production.”

AI can point you to connections that aren’t obvious at a glance. That’s valuable, but it still needs guardrails.

AI in customer education needs a reliable framework

Once AI enters the workflow, it becomes easy to mistake speed for progress. Content gets produced faster, assessments appear in minutes, and everything looks “done” earlier than it used to. The problem is that AI can make mediocre work look confident.

Kristine put it clearly:

That’s why she keeps coming back to the importance of frameworks. A framework gives you a shared definition of quality: what “good” means for your learners, what gets reviewed, and what standards content needs to meet before it ships.

She’s also clear that AI fluency doesn’t happen just because the tools are available:

“We can’t assume people know how to navigate AI just because they have access to it. We need to teach them the terminology, how to structure evaluations, how to frame prompts. That kind of fluency takes intention. It doesn’t happen by accident.”

And it’s not only customers who need that support. Teams do too. Kristine’s warning is aimed at leaders who think AI is a headcount replacement:

“Some companies are cutting headcount assuming AI will pick up the slack, but the work doesn’t really disappear. It just changes shape. We still need people who can interpret data, structure content, and ensure quality. The need for expertise doesn’t go away.”

While AI can speed up a lot of the work, Kristine’s point is that it doesn’t lower the bar for thinking. If anything, it raises it. When content can look “finished” without being useful, you need clearer standards, better evaluation habits, and people who can protect quality.

In the end, the programs that stand out will be the ones that stay close to real customer intent and keep turning it into learning that actually helps someone do the job.

Kristine is working on two upcoming whitepapers: one on creating a cross-functional Content Council to fix fragmented content governance, and another on using multiple gen-AI tools to scale customer stories from a single interview. She’s also involved with CEdMA’s empowerED26 conference happening in Austin, Texas. You can connect with Kristine via LinkedIn.

Mia has 10+ years of experience in content and product marketing for B2B SaaS. She’s been learning online ever since she got internet access. In 2021, she helped build the customer academy for Lokalise, the leading localization platform. Her background in Comparative Literature taught her to think deeply about stories, ideas, and what truly connects people. She writes about books, learning, humans, AI, and technology.