
Key takeaways

- LMS reports answer specific questions. Knowing which report to pull for which question is more valuable than having access to every report your platform offers.

- Pair LMS data with a business metric. Your LMS tells you what happened during training; an external performance metric tells you whether it had an impact.

- Avoid the most common reporting oversights. Small setup mistakes can undermine data you rely on.

Most L&D and HR managers can tell how many employees enrolled in a course and how many completed it. Far fewer can tell who is behind, which team has a knowledge gap, or what to show leadership when asked whether training is working.

The reports are there. The problem is knowing which one to pull for which question, and what to do once you have the answer.

This guide skips the full taxonomy of every LMS report available. Instead, it maps the five most important report types to the questions they actually answer, explains what good data looks like versus what is misleading, and shows how to move from a number on a screen to an action.

What LMS reports actually tell you (and what they don’t)

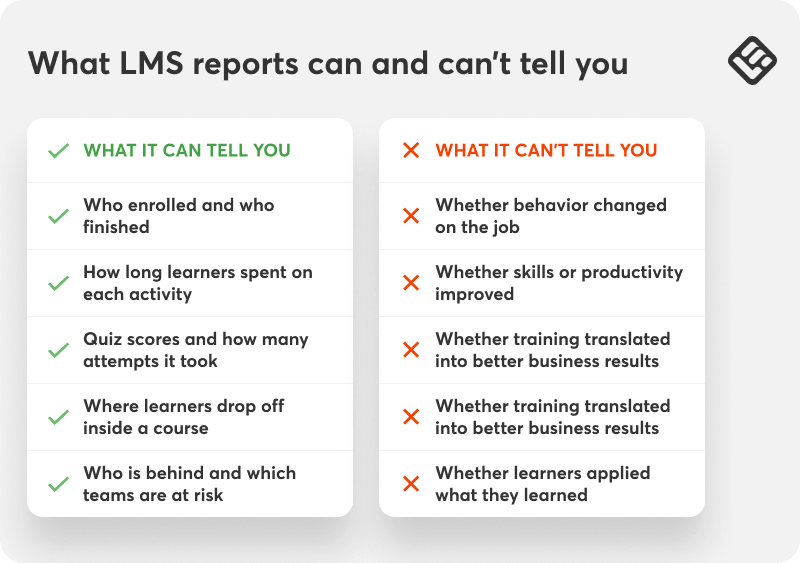

LMS reporting shows you the learning and engagement status of your employee training. It lets you:

- See who is engaged, who is falling behind, and whether people are completing training on time

- Spot where learners stall or drop off so you can improve your content

- Pull and share data fast without spending hours in spreadsheets

What it can’t do is measure business impact on its own. LMS analytics can’t tell you whether:

- Training changed behavior on the job

- Employee skills and productivity actually improved

- Business metrics moved

Making that connection requires external data, such as manager observations, performance reviews, sales numbers, or error rates, layered alongside your LMS data.

Keeping that boundary in mind will help you read the reports that follow accurately and avoid the most common ways LMS data gets misread.

👉 If you’re using LearnWorlds, the Report Center gives you customizable reports with over 95 filters, saved segments, scheduled delivery (for some report types), and a Training Matrix for a visual overview of learner progress across courses.

Find out more about LearnWorlds analytics and reports.

The five LMS reports that matter most, and what question each one answers

Each report below is organized around the question it actually answers, what to look for in the data, and what to do next, because a number on a screen is only useful if it leads somewhere.

1. Completion report

“Has everyone who should have done this training actually done it?”

| Completion report | |
|---|---|
| What it shows | Who enrolled, who finished, who is in progress, who hasn’t started |
| Who uses it | L&D/Training manager, HR manager |
| Why they use it | Tracking overall training coverage, compliance evidence |

Most LMS dashboards lead with a completion rate: say, 80%. That number alone doesn’t tell you much unless you consider the headcount behind it. In a 10-person team, 80% means two people haven’t finished their training. In an organization of 200, it means 40 people, a much wider gap that needs to be addressed.

It’s also worth separating people who started but stalled from those who never opened the course at all. Someone three-quarters of the way through will likely finish with a nudge. Someone who hasn’t logged in since enrolling two weeks ago may have a scheduling conflict, or may simply not know the training is required or has a deadline.
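If you export the completion report as a CSV, both checks above take only a few lines. The Python sketch below is a minimal illustration, not a LearnWorlds feature: the `CompletionRow` fields (`learner`, `progress_pct`) are hypothetical stand-ins for whatever columns your platform’s export actually contains.

```python
from dataclasses import dataclass


@dataclass
class CompletionRow:
    learner: str
    progress_pct: float  # hypothetical export column: 0 = not started, 100 = finished


def outstanding_headcount(completion_rate: float, headcount: int) -> int:
    """Convert a completion rate (0-1) into the number of people who haven't finished."""
    return round(headcount * (1 - completion_rate))


def split_unfinished(rows: list[CompletionRow]) -> dict[str, list[str]]:
    """Separate learners who started but stalled from those who never started."""
    return {
        "stalled": [r.learner for r in rows if 0 < r.progress_pct < 100],
        "not_started": [r.learner for r in rows if r.progress_pct == 0],
    }


# The same 80% rate hides very different gaps.
print(outstanding_headcount(0.8, 10))   # 2
print(outstanding_headcount(0.8, 200))  # 40

rows = [
    CompletionRow("Ana", 100),
    CompletionRow("Ben", 75),
    CompletionRow("Cleo", 0),
]
print(split_unfinished(rows))  # {'stalled': ['Ben'], 'not_started': ['Cleo']}
```

The “stalled” list is who gets a reminder; the “not started” list is who may need to hear that the training exists and has a deadline.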

2. Progress report

“Who is on track and who is falling behind?”

| Progress report | |
|---|---|
| What it shows | Completion percentage per learner, last active date, sections visited |
| Who uses it | L&D/Training manager, Line Manager |
| Why they use it | Identifying at-risk learners early, spotting team-level patterns |

The progress report shows you what is happening inside a course in real time: how far each learner has gotten, when they last logged in, and which sections they have and haven’t reached.

The most useful signal is progress percentage combined with the last active date. A learner at 40% who was active yesterday is in a very different position from one at 40% who last logged in two weeks ago. The date is what tells you who actually needs attention.

Filter by team rather than viewing the whole organization at once. Most LMS platforms let you save that filtered view so you don’t have to rebuild it each time. Check this report at the halfway point of your training window so you have time to reach out to learners falling behind.
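To make the “progress plus last active date” rule concrete, here is a minimal Python sketch run against exported progress data. The seven-day idle threshold and the field names (`name`, `team`, `progress`, `last_active`) are assumptions for illustration; pick a threshold that fits the length of your training window.

```python
from datetime import date, timedelta


def needs_attention(progress_pct: float, last_active: date, today: date,
                    max_idle_days: int = 7) -> bool:
    """Flag an unfinished learner whose last activity is older than max_idle_days."""
    if progress_pct >= 100:
        return False
    return (today - last_active) > timedelta(days=max_idle_days)


def flag_team(learners: list[dict], team: str, today: date) -> list[str]:
    """Apply the check to one team instead of the whole organization."""
    return [
        person["name"]
        for person in learners
        if person["team"] == team
        and needs_attention(person["progress"], person["last_active"], today)
    ]


today = date(2024, 5, 14)
learners = [
    {"name": "Dana", "team": "Sales", "progress": 40, "last_active": date(2024, 5, 13)},
    {"name": "Eli", "team": "Sales", "progress": 40, "last_active": date(2024, 4, 30)},
    {"name": "Fay", "team": "Support", "progress": 10, "last_active": date(2024, 4, 1)},
]
print(flag_team(learners, "Sales", today))  # ['Eli']
```

Dana and Eli are both at 40%, but only Eli, idle for two weeks, is flagged.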

3. Assessment report

“Do learners actually understand the material, or did they just click through it?”

| Assessment report | |
|---|---|
| What it shows | Scores per learner, pass/fail rates, performance per question |
| Who uses it | L&D/Training manager |
| Why they use it | Validating knowledge retention, identifying content gaps |

The assessment report shows how learners performed on quizzes and exams: scores per learner, pass and fail rates, attempts, and in most platforms, how they answered each individual question. Use this report to review and improve your content, not to judge individual learners.

The overall pass rate is useful, but the question-level breakdown is where you find the most actionable information. If 60% of learners are failing the same question or assignment, the issue is likely in the content, not the learners. It could be a concept that wasn’t explained well or an ambiguous question.
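If your platform lets you export question-level answers, a short script can surface the questions most learners get wrong. This Python sketch assumes a hypothetical export of `(learner, question_id, is_correct)` records and uses the 60% figure from the example above as its flag threshold.

```python
from collections import defaultdict


def question_failure_rates(answers):
    """answers: iterable of (learner, question_id, is_correct) tuples."""
    attempts = defaultdict(int)
    wrong = defaultdict(int)
    for _learner, question_id, is_correct in answers:
        attempts[question_id] += 1
        if not is_correct:
            wrong[question_id] += 1
    return {q: wrong[q] / attempts[q] for q in attempts}


def flag_questions(rates, threshold=0.6):
    """Questions failed by at least `threshold` of learners: review the content first."""
    return sorted(q for q, rate in rates.items() if rate >= threshold)


answers = [
    ("Ana", "Q1", False), ("Ben", "Q1", False), ("Cleo", "Q1", False),
    ("Dana", "Q1", True), ("Eli", "Q1", True),
    ("Ana", "Q2", True), ("Ben", "Q2", False), ("Cleo", "Q2", True),
    ("Dana", "Q2", True), ("Eli", "Q2", True),
]
rates = question_failure_rates(answers)
print(rates)                  # {'Q1': 0.6, 'Q2': 0.2}
print(flag_questions(rates))  # ['Q1']
```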

4. Compliance training report

“Are we covered? And can we prove it?”

| Compliance training report | |
|---|---|
| What it shows | Completion status and dates for mandatory courses, who is overdue |
| Who uses it | HR manager, L&D/Training manager |
| Why they use it | Regulatory evidence, tracking mandatory training deadlines |

The compliance training report lets you prove to an external party that specific people completed specific mandatory training before a specific deadline. It’s a record you produce and store, not a dashboard you check. The fields that matter are learner name, course name, and completion date.

If your compliance training requires recertification, track certificate issuance dates and set a reminder process to identify learners whose certificates are approaching expiry.
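A reminder process can start from nothing more than an export of certificate issuance dates. The Python sketch below assumes a hypothetical 365-day validity period and a 30-day warning window; real recertification periods depend on the regulation or policy involved.

```python
from datetime import date, timedelta


def needs_recertification(certificates: dict[str, date], today: date,
                          validity_days: int = 365, warn_days: int = 30):
    """Return (learner, expiry) pairs expiring within warn_days, soonest first.

    Certificates that have already expired are included too, since they
    also need recertification.
    """
    due = []
    for learner, issued in certificates.items():
        expiry = issued + timedelta(days=validity_days)
        if expiry <= today + timedelta(days=warn_days):
            due.append((learner, expiry))
    return sorted(due, key=lambda item: item[1])


today = date(2024, 6, 1)
certificates = {
    "Ana": date(2023, 6, 15),   # expires within the warning window
    "Ben": date(2024, 1, 10),   # valid for months yet
    "Cleo": date(2023, 5, 1),   # already expired
}
for learner, expiry in needs_recertification(certificates, today):
    print(learner, expiry)
# Cleo 2024-04-30
# Ana 2024-06-14
```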

5. Engagement report

“Are learners actually consuming the content, or just opening it?”

| Engagement report | |
|---|---|
| What it shows | Video watch rates, time spent per module, drop-off points, last activity date |
| Who uses it | L&D/Training manager |
| Why they use it | Content review and iteration, identifying where learners disengage |

Anyone can mark a course complete without really engaging with it. Engagement metrics show you where learners are actually spending time, where they slow down, and where they stop.

For example, if most people drop off at the same point in a course, something there likely isn’t working: the material may be too dense, the format may shift, or the module may run too long for the context people are learning in.

Use these metrics when you’re reviewing or updating content to decide which modules to rework first.
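Finding the most common drop-off point is a simple count. This Python sketch assumes you can export, per learner, how many modules of a course they have completed (a hypothetical field); for everyone who didn’t finish, it counts the module where they stopped.

```python
from collections import Counter


def drop_off_points(modules_completed: dict[str, int], total_modules: int) -> Counter:
    """For each learner who did not finish, count the module where they stopped
    (the one after the last module they completed)."""
    return Counter(
        done + 1 for done in modules_completed.values() if done < total_modules
    )


completed = {"Ana": 2, "Ben": 2, "Cleo": 5, "Dana": 1, "Eli": 2}
print(drop_off_points(completed, total_modules=5).most_common(1))  # [(3, 3)]
```

Here three of the four learners who didn’t finish stopped in module 3, which makes it the first module to review.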

The right report for the right person at a glance

The five reports above cover different questions and different audiences. When you need to decide quickly which one to pull, use this as your reference.

| Primary question | Report to use | Stakeholder |
|---|---|---|
| Who is behind across the organization? On which programs? | Progress report + completion report | L&D/Training Manager |
| Are my people on track? Who do I need to follow up with? | Completion report filtered by team | Line Manager |
| Are we compliant? Can we prove it? | Completion report with dates, exported and stored externally | HR Manager |
| Is training happening and is it working? | Completion rate + assessment scores + engagement summary | Department Head/HR Manager |
| Is the content working or does it need revision? | Assessment report + engagement report | L&D/Training Manager |

A note on automated delivery:

Most LMS platforms, including LearnWorlds, let you schedule certain reports to be sent automatically to specified recipients on a regular cadence. Instead of pulling and sending data manually, you set it up once and it runs in the background.

Learn more about scheduled reports in LearnWorlds.

How to measure training effectiveness using LMS vs business data

When leadership asks whether training is effective, they don’t mean whether people finished it or what they scored on the final exam. They want to know whether it had an impact on the business.

The approach is simple: for each training program, identify one business or performance metric you expect it to influence. Measure it before the program, and again at 30, 60, and 90 days after completion.

Some examples of what this looks like in practice:

- Safety program: incident or error rates before and after

- Employee onboarding: time-to-productivity for new employees

- Sales skills enablement: time-to-close or revenue per rep before and after

- Product knowledge: ticket resolution times, CSAT scores, or sales (depending on the team)

LMS analytics give you the learning and engagement data that HR and L&D departments care about. Your HR system, CRM, or operational dashboards demonstrate the business impact leadership cares about. Put them side by side and you have the complete picture.
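The before-and-after comparison at 30, 60, and 90 days comes down to percent change against a baseline. Here is a minimal Python sketch; the safety-program figures are invented purely for illustration.

```python
def percent_change(baseline: float, value: float) -> float:
    """Relative change from the pre-training baseline, in percent."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (value - baseline) / baseline * 100


def impact_summary(baseline: float, checkpoints: dict[int, float]) -> dict[int, float]:
    """checkpoints maps days after completion (e.g. 30, 60, 90) to the measured metric."""
    return {
        day: round(percent_change(baseline, value), 1)
        for day, value in sorted(checkpoints.items())
    }


# Hypothetical safety program: incidents per month before and after training.
summary = impact_summary(baseline=12, checkpoints={30: 10, 60: 9, 90: 8})
print(summary)  # {30: -16.7, 60: -25.0, 90: -33.3}
```

A plain before-and-after comparison like this doesn’t rule out other causes, such as seasonality or a new tool rolled out at the same time, so read it alongside what else changed in that period.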

Five common mistakes in training reporting and how to avoid them

Even with the right reports in place, a few common oversights tend to undermine the data. These are the ones worth watching for.

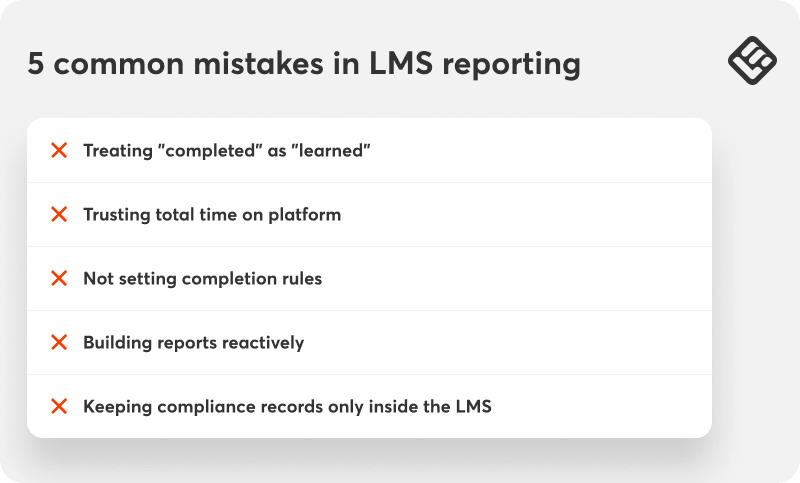

Treating “completed” as “learned”

Completion is one of the easiest training and development metrics to track, which makes it tempting to treat it as the primary measure of success. But finishing a course and learning from it are not the same thing. To understand how much employees have actually retained, check assessment scores and pass rates as well.

Trusting total time on platform

This figure includes time spent with a browser tab open but idle. It overstates active learning and will make your training programs look more engaging than they are. For more accurate measurement, check time spent inside the course player or at the module level too.
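The gap between total and active time is easy to see with timestamped activity events. This Python sketch is one common way to estimate active time, not how any particular LMS calculates it: it adds up the gaps between consecutive events and ignores any gap longer than an assumed five-minute idle cutoff.

```python
from datetime import datetime, timedelta


def active_time(events: list[datetime],
                idle_cutoff: timedelta = timedelta(minutes=5)) -> timedelta:
    """Sum gaps between consecutive activity events, skipping gaps longer than idle_cutoff."""
    total = timedelta()
    ordered = sorted(events)
    for previous, current in zip(ordered, ordered[1:]):
        gap = current - previous
        if gap <= idle_cutoff:
            total += gap
    return total


events = [
    datetime(2024, 5, 14, 10, 0),
    datetime(2024, 5, 14, 10, 2),
    datetime(2024, 5, 14, 10, 4),
    datetime(2024, 5, 14, 11, 0),  # tab left open for almost an hour
    datetime(2024, 5, 14, 11, 3),
]
print(events[-1] - events[0])  # 1:03:00 on the clock
print(active_time(events))     # 0:07:00 of actual activity
```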

Not setting completion rules

If you don’t set completion rules (no minimum watch time on videos, no passing score on quizzes), a 95% completion rate may just mean that 95% of learners opened the course. It tells you who clicked through, not who learned anything.

And while that may not matter much for low-stakes training, like soft skills, it matters a great deal for safety, compliance, or any program where incomplete learning carries real risk.
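In most platforms, completion rules are settings rather than code, but the logic behind them is simple. This Python sketch shows a hypothetical rule that counts a learner as complete only if every video was watched past a minimum share and the quiz was passed; the 90% and 80% thresholds are illustrative assumptions.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class CompletionRule:
    min_watch_ratio: float = 0.9  # hypothetical: share of each video that must be watched
    passing_score: float = 0.8    # hypothetical: minimum quiz score on a 0-1 scale

    def is_complete(self, video_watch_ratios: list[float],
                    quiz_score: Optional[float]) -> bool:
        """Count a learner as complete only if every video and the quiz meet the rule."""
        videos_ok = all(ratio >= self.min_watch_ratio for ratio in video_watch_ratios)
        quiz_ok = quiz_score is not None and quiz_score >= self.passing_score
        return videos_ok and quiz_ok


rule = CompletionRule()
print(rule.is_complete([1.0, 0.95], 0.85))  # True
print(rule.is_complete([1.0, 0.10], 0.85))  # False: skipped most of a video
print(rule.is_complete([1.0, 0.95], None))  # False: never took the quiz
```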

Building reports reactively

At the start of each program, set up and save the filtered views you’ll need by team, course, completion status, or whatever is most relevant to stakeholders. That way you can retrieve your report in one click instead of building it from scratch every time someone needs to check in.

Keeping compliance records only inside the LMS

Completion records inside the LMS are tied to the course being active and the account remaining in good standing. If a course gets archived or a plan changes, those records may become difficult or impossible to access. To avoid losing valuable compliance proof, export completion data with dates and store it somewhere independent of the platform.

Start with the question, not the report

The five reports covered here handle the vast majority of what L&D and HR teams actually need to track employee training: who has finished, who is falling behind, whether people learned anything, whether you can prove compliance, and whether the content itself is working.

Pick the report that matches your most pressing question right now. Build the infrastructure (filters, segments, and scheduled reports) before your next program launches, so your data is there when you need it.

LearnWorlds for employee training helps businesses onboard, train, and upskill their employees while giving them the data to monitor their programs’ performance. Try LearnWorlds now with a 30-day free trial.

FAQs

The most reliable method is an LMS with built-in reporting, like LearnWorlds. The starting point is a completion report filtered by course and team showing who enrolled, who finished, and who hasn’t started. For ongoing tracking, set up automated scheduled reports delivered to line managers on a regular basis so that follow-up happens proactively.

On-the-job training is harder to track in an LMS because it happens outside the platform. The closest proxy is a combination of post-training assessments to confirm knowledge was retained and observation checklists completed by managers or supervisors. Both can be built directly inside your LMS as a quiz or form activity so the record sits alongside the rest of the learner’s training data.

Measurement operates at three levels: activity (did they complete it?), knowledge (did they learn?), and impact (did it change anything?). LMS reports cover the first two: completion and engagement data for activity, and assessment scores for knowledge.

Measuring impact requires connecting training to an external business metric, such as error rates, support tickets, or performance review scores, by comparing performance before and after the training program.

LMS assessments allow you to measure employee understanding and retention, while reports track attempt/pass rates, scores, and number of certificates issued. LMS metrics do not tell you whether the skill has been applied on the job.

To track whether employees have applied skills on the job, pair LMS reports with manager observation records or pre/post performance assessments built into the training program.

Completion rates and assessment scores tell you only part of the story. To measure whether training actually worked, define one business metric you expect it to influence: incident rates for a safety program, time-to-productivity for onboarding, revenue per rep for sales training. Then, measure this metric before the program and again at 30, 60, and 90 days after.

The specific steps vary by platform, but the general process is:

1. Go to Reports or Analytics: Find this in your LMS main navigation or sidebar.

2. Select the report type: Progress, completion, assessment, engagement (choose what you need).

3. Apply filters: Filter by course, date range, team, or user group to narrow the data.

4. View, export, or schedule: View live in the platform, export as CSV or Excel, or schedule automatic delivery to an email address.

5. Save as a segment: Most platforms let you save a filtered report by name so you can retrieve it with one click next time.

Androniki Koumadoraki

Androniki is a Content Writer at LearnWorlds sharing Instructional Design and marketing tips. With solid experience in B2B writing and technical translation, she is passionate about learning and spreading knowledge. She is also an aspiring yogi, a book nerd, and a talented transponster.